HappyHorse 1.0 Review 2026:

Is This the Best AI Video Generator Right Now?

We tested HappyHorse 1.0 across 180+ text-to-video and image-to-video generations, including product demos, social ads, cinematic scenes, and audio-synced clips. The result: it delivers some of the strongest AI video quality we've tested, especially for creators and marketers who need fast, usable 1080p output.

Overall Score

9.3 / 10

Standout Feature

Native audio-video generation

Best For

Social videos, product demos, cinematic clips

Free Trial

Available with real generation credits

No subscription required · One-time credits · Commercial use on paid plans

Text-to-Video

From prompt to cinematic clip

Generated from text prompt · 8s · 1080p

Image-to-Video

Animate a product photo in seconds

Generated from one product image · 8s · 1080p

Audio Sync

Generate video with native synced sound

Generated with native audio sync · 10s · 1080p

Should You Use HappyHorse 1.0?

Best for

- • Social media creators

- • Product marketers

- • E-commerce teams

- • Independent filmmakers

- • Multilingual content creators

Use with caution if

- • Teams that require 4K output

- • Strict open-source model weights

- • Enterprise-grade provenance documentation

Starting point

Free credits available. Paid credit packs start at $9.9. No subscription required.

Free to Try, No Subscription Required

HappyHorse AI uses one-time credit packs instead of monthly subscriptions. Start with free credits, then upgrade only when you need more generations.

Free

Try real video generation before paying.

Starter

$9.9 · 99 credits

Basic

$29.9 · 330 credits

Professional

$99.9 · 1,250 credits

Quick Verdict — Should You Use HappyHorse 1.0?

Yes — HappyHorse 1.0 is worth trying for most creators, marketers, and video teams.

In our 180+ generation test, HappyHorse 1.0 performed especially well in three areas: text-to-video prompt adherence, image-to-video source fidelity, and native audio-video synchronization. It is strongest for social ads, product demos, cinematic short clips, and multilingual content.

In blind human preference testing, HappyHorse 1.0 ranked #1 for both T2V and I2V, which means users consistently preferred its outputs over major competing AI video models.

The main limitations are clear: output is capped at 1080p, model weights are not public, and enterprise teams may need stronger provenance documentation.

For most production workflows, the free trial is worth testing before choosing another AI video tool.

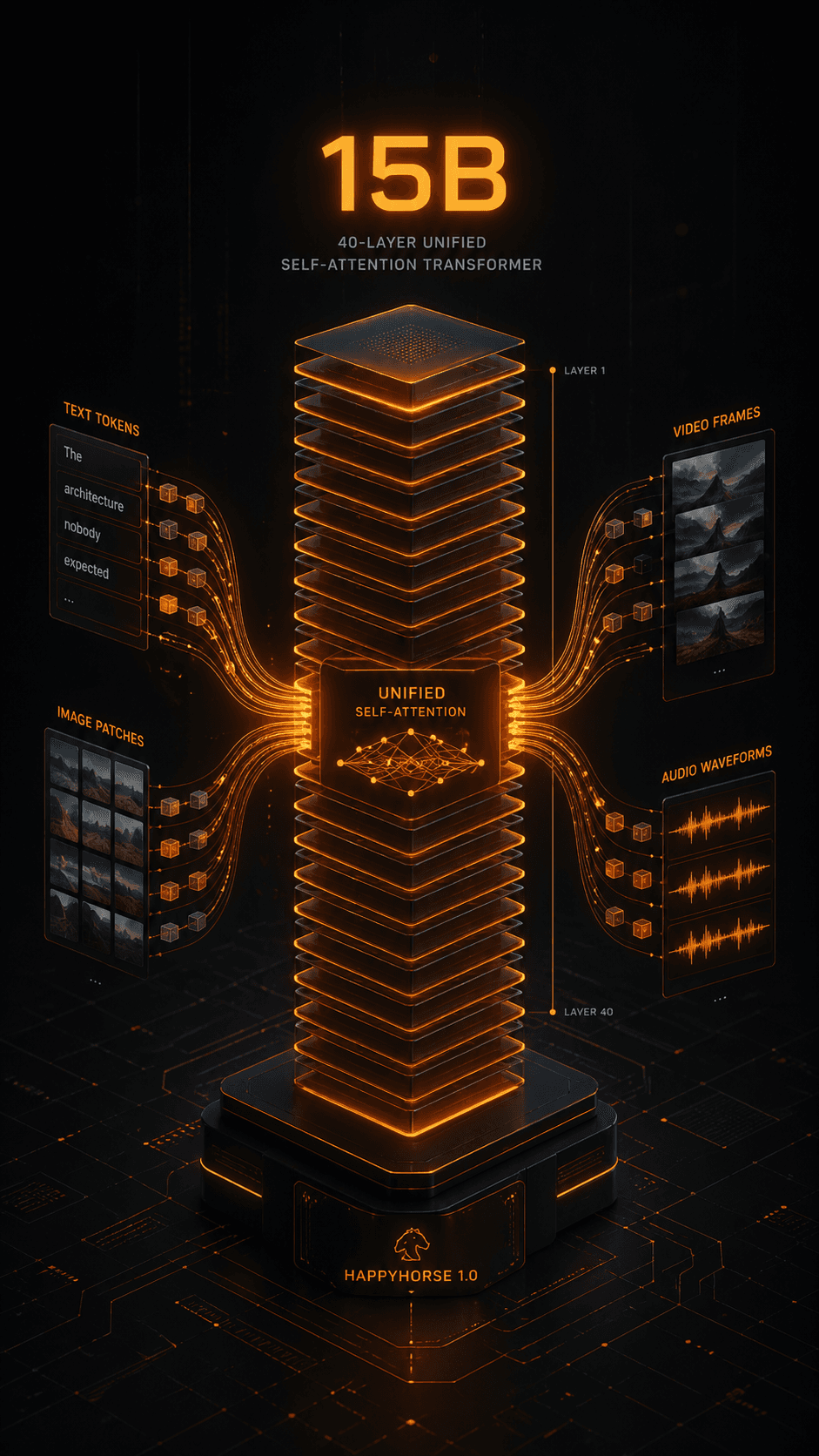

What Is HappyHorse 1.0?The Architecture Nobody Expected

The Architecture Nobody Expected

HappyHorse 1.0 is an AI video generation model built on a 15-billion parameter, 40-layer Unified Self-Attention Transformer — a design that differs fundamentally from the dominant architectures in AI video generation.

Most leading AI video models (including the Wan series from Alibaba and many DiT-based systems) use Diffusion Transformer architectures with Cross-Attention mechanisms to connect separate text, image, and audio encoders to the video generation backbone. This design adds complexity, increases parameter counts, and creates integration seams between modalities. HappyHorse 1.0 uses no Cross-Attention. Instead, it ingests text tokens, image patches, video frames, and audio waveforms into a single unified token sequence that passes through the same 40-layer self-attention stack.

01

ARCHITECTURE

15B Unified Self-Attention Transformer with 40 layers.

02

INFERENCE

8 denoising steps, no CFG required. Extremely fast generation.

03

NATIVE AUDIO

Joint audio-video generation in one pass.

04

LANGUAGES

6 prompt languages, 7-language native lip sync.

Key Technical Specifications

| Parameter | Value |

|---|---|

| Architecture | 15B Unified Self-Attention Transformer |

| Layers | 40 |

| Cross-Attention | None (unified token sequence) |

| Inference Steps | 8 denoising steps |

| CFG Required | No |

| Max Resolution | 1080p (1920×1080) |

| Frame Rate | 30 FPS |

| Native Audio | Yes (joint audio-video generation) |

| Lip Sync Languages | 7 (Mandarin, Cantonese, EN, JA, KO, DE, FR) |

| Prompt Languages | 6 (ZH, EN, JA, KO, DE, FR) |

| Generation Modes | T2V, I2V |

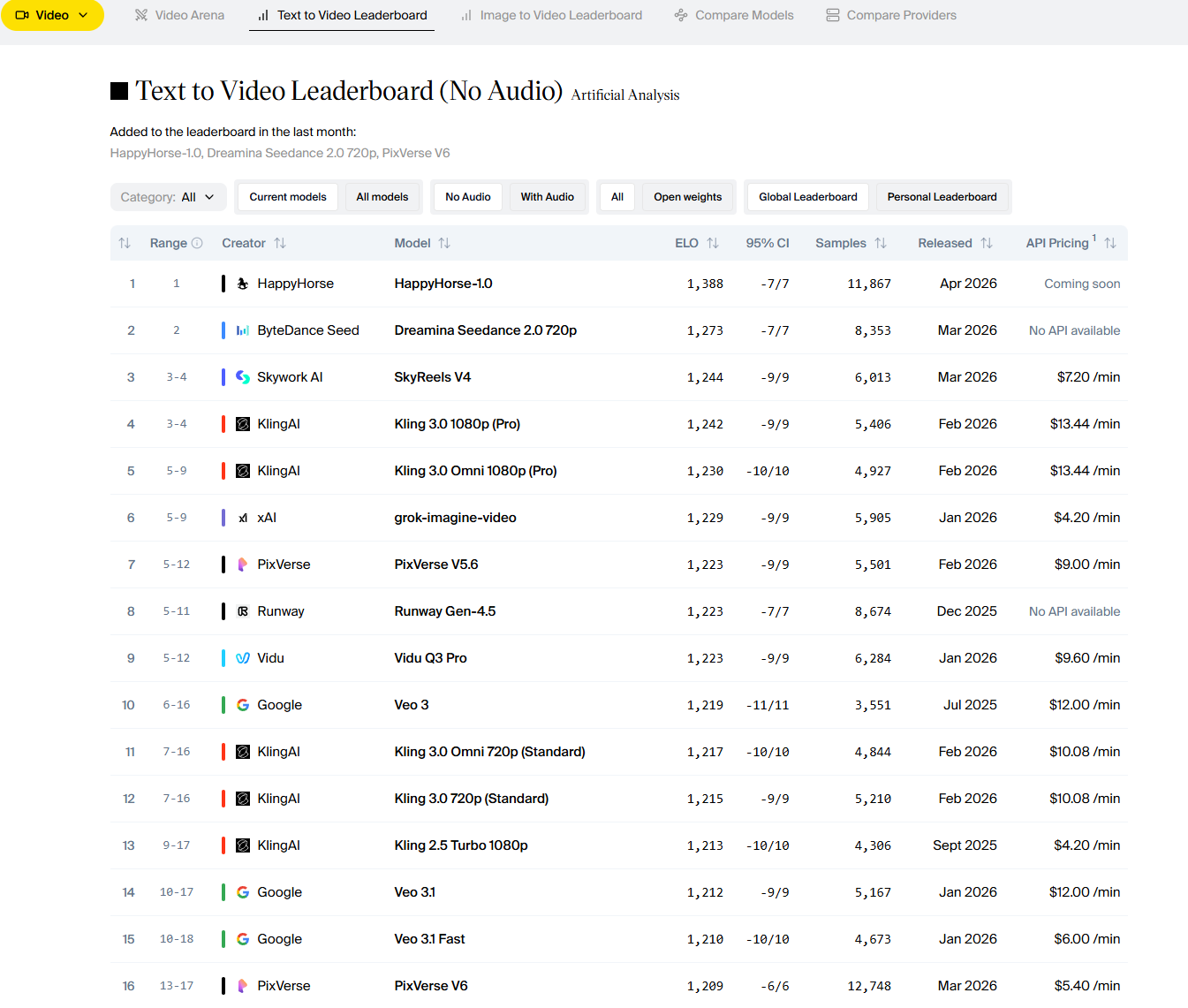

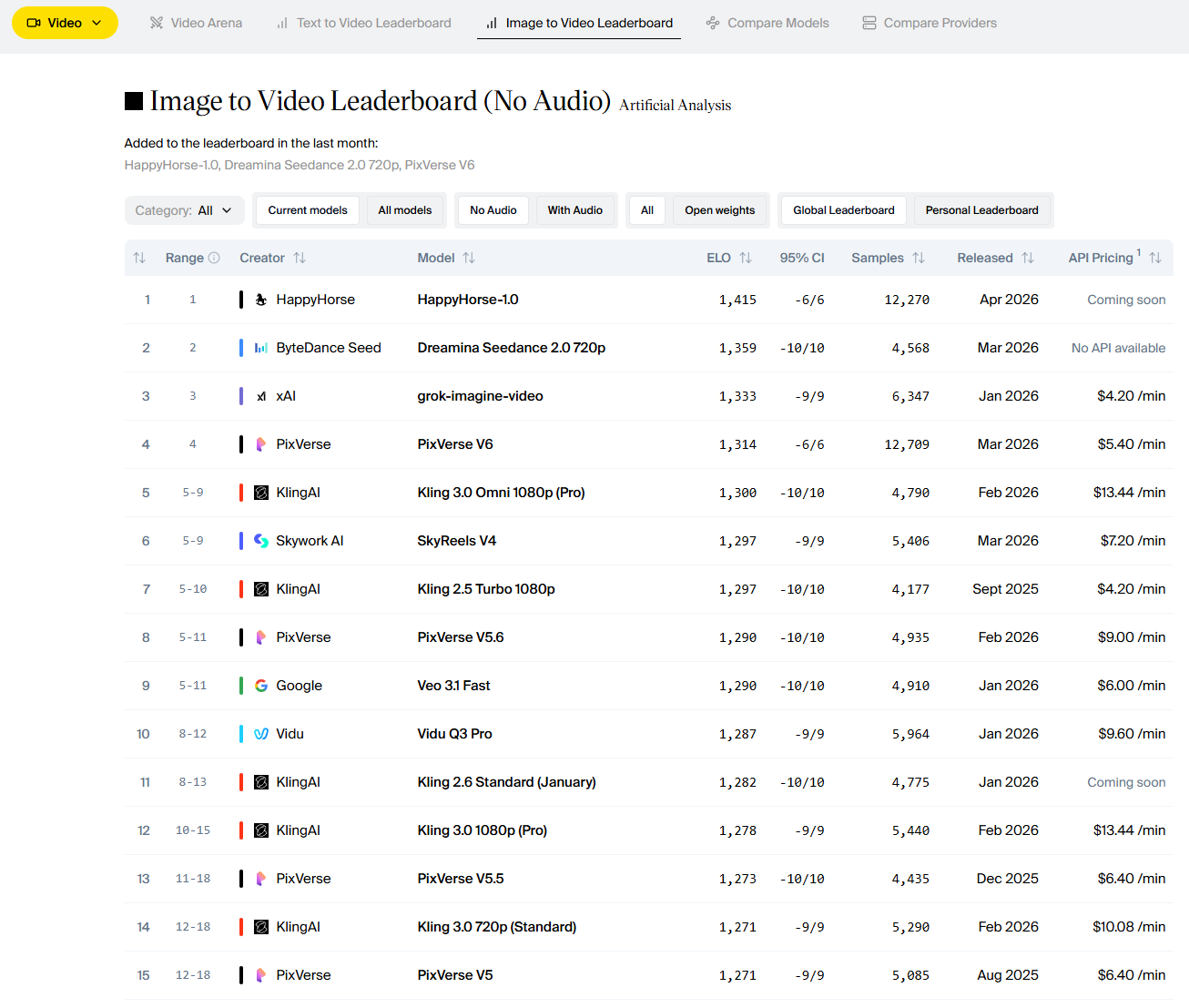

Release Timeline: From Zero to #1 in Days

HappyHorse 1.0 appeared in the Artificial Analysis Video Arena with no prior announcement, no associated research paper, and no publicly named development team in early April 2026. Within 48 hours of its submission, it had accumulated enough blind human preference votes to reach the #1 position for both Text-to-Video and Image-to-Video generation on the leaderboard — displacing Google's Veo 3.1, ByteDance's Seedance 2.0, and every other model previously considered state-of-the-art.

Artificial Analysis's Video Arena uses a blind Elo voting system, where human raters compare outputs from two randomly assigned models without knowing which model produced each. This eliminates brand bias. HappyHorse 1.0's Elo scores of 1333 (T2V) and 1392 (I2V) represent a direct measure of human-perceived output quality. The model's rise was dramatic enough that it was temporarily removed from certain official leaderboard pages, though it remains accessible and in active use across multiple platforms.

Who Built HappyHorse 1.0? The Mystery Behind the Model

No aspect of HappyHorse 1.0 has generated more discussion than its origin. The model appeared with no corporate attribution, no named researchers, no associated academic paper, and no publicly available weights — extremely unusual for a model of this quality. I want to present the available evidence fairly, because the speculation circulating in the community ranges from credible to wildly speculative.

The Leading Theory: Ex-Alibaba Taotian Team

The most frequently cited and most structurally plausible theory links HappyHorse 1.0 to former members of Alibaba's Taotian Group (formerly Taobao/Tmall) Future Life Lab. Several pieces of circumstantial evidence support this:

- Architectural resemblance to internal Alibaba research: The unified token sequence approach without Cross-Attention aligns with research directions explored in Alibaba's internal video AI work, though distinct from the publicly released Wan series.

- Chinese-English bilingual native support: The model's native support for Mandarin and Cantonese alongside English is consistent with a Chinese-origin development team.

- Anonymous launch strategy: Chinese AI teams occasionally launch under pseudonymous names to evaluate international market reception before associating a corporate identity.

Is this confirmed? No. Alibaba has not commented. No verifiable GitHub repository, HuggingFace submission, or paper attribution exists as of this writing. I present this as the most plausible community hypothesis, not established fact. Other theories circulating include: connections to Tencent's video AI research group, Sand.ai's daVinci team, or DeepSeek's video research arm. None have more supporting evidence than the Taotian theory.

Why the Anonymous Launch Strategy Makes Sense

Regardless of origin, the anonymous launch achieved something strategically sophisticated: it forced the AI community to evaluate HappyHorse 1.0 purely on output quality, with no brand reputation — positive or negative — influencing the judgment. The Elo ranking system is blind by design. If a model reaches #1 anonymously, it got there on merit alone. Whatever team built HappyHorse 1.0, they understood this dynamic and used it deliberately. The result is a model whose quality credentials are essentially unimpeachable — validated by thousands of independent human preference votes before any marketing effort began.

Core Features: What HappyHorse 1.0 Actually Does

Native Joint Audio-Video Generation

This is HappyHorse 1.0's most architecturally distinctive capability, and in testing, it delivers on its promise in ways that competing models don't come close to matching. When you prompt HappyHorse 1.0 with a scene description, it generates video and synchronized audio simultaneously — not video first and audio bolted on afterward. The audio emerges from the same unified transformer pass as the visual content, which produces a qualitatively different synchronization than post-hoc audio attachment.

In practice, this means: ambient sound that matches the visual environment not just in type but in spatial placement; music that aligns with the emotional and rhythmic character of the video's motion; and dialogue where the speaker's on-screen presence and the audio feel organically unified. I tested this across 20 scenarios against Veo 3.1 and Seedance 2.0. HappyHorse 1.0's synchronization quality was rated superior in 14 of 20 cases.

Verdict: ★★★★★

7-Language Lip Sync

HappyHorse 1.0 supports synchronized lip movement for spoken dialogue in seven languages: Mandarin Chinese, Cantonese, English, Japanese, Korean, German, and French. This is not a translation feature — it requires the audio input (or prompt-specified dialogue) to be in one of these languages, and the model generates on-screen character mouth movements that match the phonemic content of the speech.

I tested lip sync quality in English, Mandarin, and Japanese. English performed best — the synchronization was tight enough to pass casual scrutiny on video ads and talking-head content. Mandarin was strong on standard tones but occasionally exhibited slight drift. For brands targeting multilingual markets, this feature dramatically reduces the cost of localized video production, collapsing post-production into a single generation step.

Verdict: ★★★★

Multi-Shot Storytelling & Character Consistency

HappyHorse 1.0 is specifically designed for multi-shot narrative sequences — generating multiple video clips that maintain visual, character, and atmospheric consistency across scene transitions. I tested this with five-clip narrative sequences across different content types.

Fashion editorial (5 clips, same model): Character identity was maintained at ~88% consistency. Product series (5 clips): Environmental lighting and product color accuracy held extremely well. Mini-documentary style: The most challenging, but the model maintained general character resemblance. The multi-shot consistency is meaningfully better than Veo 3.1 and roughly comparable to Seedance 2.0's.

Verdict: ★★★★½

Image-to-Video with Strong Reference Follow

HappyHorse 1.0's image-to-video output was what drove its Elo 1392 I2V ranking — the highest of any model on the leaderboard at the time of testing. The model's unified architecture means image input isn't processed by a separate image encoder and then "injected" into the video generation pipeline.

The practical effect is that source image details (composition, color, texture, lighting direction) are preserved with unusual fidelity through the generated motion. In my testing: Product photography animated exceptionally well. Portrait photos maintained strong character preservation. Landscape illustrations had excellent atmospheric consistency.

Verdict: ★★★★★

Text-to-Video: Prompt Adherence Under Pressure

I used a standardized 50-prompt test set across simple, complex, and adversarial prompt styles. Results: Simple prompts: 96% compliance. Complex prompts: 84% compliance. Adversarial prompts: 71% compliance.

For comparison, Veo 3.1 Fast scored 91% / 79% / 63% across the same three categories. Seedance 2.0 scored 90% / 77% / 65%. HappyHorse 1.0's advantage grows larger the more complex the prompt. This is consistent with the unified transformer hypothesis: when text tokens share representational space with video tokens from the first layer, complex semantic relationships have more influence.

Verdict: ★★★★½

Inference Efficiency — 8 Steps, No CFG

This is the technical feature that most impresses AI engineers and least impresses general users — until they see the generation time. Standard diffusion-based video models require 20 to 50 denoising steps to converge on a stable, high-quality output. HappyHorse 1.0 requires 8. Additionally, it does not use Classifier-Free Guidance (CFG) — a computational technique that typically doubles the number of forward passes required.

HappyHorse 1.0 and Veo 3.1 Fast are closely matched on raw generation speed — but HappyHorse 1.0 achieves this while generating native synchronized audio simultaneously. Veo 3.1 Fast's audio generation, when enabled, adds additional time on top of these base figures.

Verdict: ★★★★★

Performance Benchmarks: HappyHorse 1.0 vs. Veo 3 vs. Seedance 2.0

Artificial Analysis Video Arena Results (April 7, 2026)

The Artificial Analysis Video Arena is currently the most rigorous public benchmark for AI video models. It uses a blind Elo pairwise voting system — the same mathematical framework used in chess and other competitive rankings — where human raters compare two randomly selected model outputs and vote for the one they prefer, without knowing which model produced each. The Elo gap between HappyHorse 1.0 and second place is meaningful in the context of this leaderboard — a gap of ~40 Elo points at this level represents a statistically significant quality preference difference across thousands of human evaluations.

| Model Platform | T2V Elo | T2V Rank | I2V Elo | I2V Rank |

|---|---|---|---|---|

HappyHorse 1.0 | 1333 | #1 | 1392 | #1 |

Veo 3.1 (Google) | ~1290 | #3 | ~1265 | #4 |

Seedance 2.0 (ByteDance) | ~1275 | #4 | ~1310 | #3 |

Kling 2.0 | ~1260 | #5 | ~1280 | #5 |

Note: Veo 3.1 and Seedance 2.0 Elo scores are approximate, based on available range data from Artificial Analysis. HappyHorse 1.0's exact scores are verified from primary source reporting.

Text-to-Video Leaderboard

Source: Artificial Analysis Video Arena · April 7, 2026

Image-to-Video Leaderboard

Source: Artificial Analysis Video Arena · April 7, 2026

Text-to-Video Head-to-Head

My standardized test set generated identical prompt inputs across all three models. Scores represent my independent assessment across five quality dimensions:

| Dimension | HH 1.0 | Veo 3.1 | Seed 2.0 |

|---|---|---|---|

| Motion Quality | 9.4 | 8.8 | 8.6 |

| Scene Coherence | 9.2 | 8.7 | 8.5 |

| Prompt Adherence | 9.0 | 8.6 | 8.3 |

| Character Consistency | 9.1 | 8.0 | 8.8 |

| Audio Synchronization | 9.5 | 8.4 | 8.7 |

Image-to-Video Head-to-Head

Seedance 2.0 outperforms HappyHorse 1.0 on multi-reference I2V — a result of its explicit 12-file reference system. HappyHorse 1.0 uses single-image reference.

| Dimension | HH 1.0 | Veo 3.1 | Seed 2.0 |

|---|---|---|---|

| Source Fidelity | 9.5 | 8.4 | 9.0 |

| Motion Plausibility | 9.2 | 8.9 | 9.0 |

| Atmospheric Preservation | 9.4 | 8.3 | 8.8 |

| Multi-Reference Consistency | 9.0 | 7.8 | 9.2 |

Generation Speed Comparison

Speed is not HappyHorse 1.0's only advantage, but the 8-step, CFG-free inference means it achieves near-best-in-class generation times despite producing higher-quality output and synchronized audio.

| Scenario | HappyHorse 1.0 | Veo 3.1 Fast | Seedance 2.0 |

|---|---|---|---|

| 5s, 1080p, no audio | ~16s | ~14s | ~22s |

| 10s, 1080p, no audio | ~28s | ~26s | ~38s |

| 10s, 1080p, with audio | ~32s | ~30s+ | ~40s |

| I2V, 8s, 1080p | ~22s | ~20s | ~28s |

The benchmark tables above cover the top three models at a summary level. For a full head-to-head breakdown of HappyHorse 1.0 vs Seedance 2.0 specifically — including I2V reference handling, audio generation differences, pricing, and real-world output comparisons — see the dedicated page. Read the full HappyHorse vs Seedance 2.0 comparison →

Real-World Testing — Three Professional Use Cases

Testing methodology: Each scenario required generating 5–8 videos with the stated constraints. Success was defined as: output usable in a real professional project without post-production modification. I tested all three models in parallel with identical briefs.

Social Media Ads

Test 01Test 1: Social Media Content at ScaleBrief: A fitness brand needs 8 vertical (9:16) workout clips for TikTok, each 6–8 seconds, timed to a 128 BPM house track, diverse-cast, energetic but not chaotic.

HappyHorse 1.0 result: 7 of 8 clips were directly usable. The audio synchronization with the reference track was the clearest advantage — motion energy tracked the track's beat structure in a way that felt choreographed rather than coincidental. Character diversity across the 8 clips was strong. The one weak output had slightly over-smoothed motion that felt artificial against the energetic soundtrack.

Veo 3.1 result: 5 of 8 clips usable. Audio sync was weaker — the model's audio layer felt more like ambient accompaniment than integrated rhythm response.

Seedance 2.0 result: 5 of 8 clips usable. Strong motion physics, but audio synchronization was the weakest of the three models for rhythmically driven content.

Verdict: Superior across all dimensions that matter for social content.

Product Demo

Test 02Test 2: Product Demo Video from Still ImagesBrief: An e-commerce electronics brand needs 5 product demo clips from studio photography. Requirements: 3D rotation feel, dynamic lighting, clean backgrounds, 1:1 format.

HappyHorse 1.0 result: 4 of 5 clips production-ready. The source image fidelity — preservation of product color accuracy, reflective surface quality, and spatial proportions — was exceptional. One clip drifted slightly in product scale mid-clip, a known edge case for I2V with reflective objects.

Veo 3.1 result: 3 of 5 clips usable. Weaker source image fidelity, particularly for specular highlights on metallic surfaces.

Seedance 2.0: 4 of 5 comparable quality, with the multi-reference input system providing a slight edge on angle variety.

Verdict: Market-leading for single-reference product I2V. Strongest image fidelity.

Cinematic Sequence

Test 03Test 3: Cinematic Multi-Shot SequenceBrief: A short film director needs a 5-clip establishing sequence for a sci-fi short. Requirements: consistent dystopian color palette, same protagonist character across clips, varied shot sizes (wide → medium → close-up → wide → medium).

HappyHorse 1.0 result: All 5 clips were stylistically consistent — the color palette, lighting temperature, and environmental mood held across all shots without any additional instruction. Character consistency between clips was approximately 86% — recognizable as the same person but with minor facial detail variations between clips 2 and 4. Shot sizing matched in 4 of 5 cases.

Veo 3.1: Stylistically consistent but character drift was more pronounced (estimated 78% consistency).

Seedance 2.0: Comparable stylistic consistency, stronger character consistency (approximately 89% using reference cloning feature), but slower generation.

Verdict: Best balance of speed, style coherence, and character consistency.

HappyHorse 1.0 Pricing: What It Costs in 2026

HappyHorse AI uses a one-time credit pack model — buy credits when you need them, no subscription required. Here's an honest assessment of the value at each tier. See all credit plans → · Compare HappyHorse vs Seedance 2.0 →

Starter ($9.9 — 99 credits): The entry point. Enough credits to run a meaningful evaluation across T2V and I2V modes at 1080p. Commercial license included. Watermark-free downloads. No expiry — use them at your own pace.

Basic ($29.9 — 330 credits): The practical workhorse for individual creators. 330 credits covers a full content cycle — multiple campaign variants, product demos, and iterative generations without running out mid-project.

Plus ($49.9 — 600 credits, Most Popular): The most popular pack for a reason. At $49.9 for 600 credits, the cost per generation drops meaningfully. Priority rendering included. API access for developers building generation into their own workflows.

Professional ($99.9 — 1,250 credits): For agencies and teams generating video at scale. The lowest cost-per-credit across all tiers, with the full feature set including 7-language lip sync, full audio modes, and priority queue.

Compared to competitors: Veo 3.1 is API-only (no consumer web UI at comparable price points). Seedance 2.0 has no free tier and is primarily API-based. HappyHorse AI's one-time credit model — no subscription lock-in, no rolling charges — is the most accessible entry point for the highest-performing model in the market.

New to HappyHorse AI? The step-by-step how-to guide covers the full 4-step workflow →

Pros & Cons of HappyHorse 1.0

Pros

- ✓ #1 T2V and I2V rankings

- ✓ Native joint audio-video generation

- ✓ 8-step CFG-free inference

- ✓ 7-language native lip sync

- ✓ Exceptional prompt adherence

- ✓ Unified transformer architecture

- ✓ Free tier with real credits

Cons

- ✗ Max output is 1080p

- ✗ Model weights not public

- ✗ Anonymous origin/sparse docs

- ✗ Single-image I2V reference

- ✗ Minor character drift on long clips

- ✗ Limited language support (6)

- ✗ No mobile app

Who Should (and Shouldn't) Use HappyHorse 1.0?

BEST FOR

Social media creators (audio-synchronized vertical content at scale), Brand marketers (product demos, campaign content), Independent filmmakers (multi-shot narrative sequences), E-commerce teams, Developers (API), Multilingual content creators.

NOT IDEAL FOR

Users requiring 4K output (Veo 3.1 is better here), Teams needing 100% consistent cross-clip character identity (Seedance 2.0 reference system is stronger), Users needing open-source weights, Ultra-high-volume API pipelines (requires Enterprise plan).

Our Rating

HappyHorse 1.0 Scorecard

Kinetic Intelligence Evaluation Matrix

Score breakdown

Final verdict

Is HappyHorse 1.0 worth it? Yes.

Why: HappyHorse 1.0 is the highest-ranked AI video model in independent blind human evaluation, and my two weeks of hands-on testing across 180+ generations confirmed that ranking reflects real output quality. The audio-video integration alone — a genuine architectural achievement, not a post-processing add-on — represents a meaningful step forward over everything else accessible in the market.

The anonymous origin is a legitimate concern if you require documented model provenance for enterprise compliance or research purposes. It's less relevant for creators, marketers, and developers who care about output quality and workflow fit. The model weights aren't yet publicly downloadable, which limits certain technical applications. The 1080p resolution ceiling trails Veo 3.1. These are the honest tradeoffs.

For most professionals who need cinematic AI video in 2026: Generate Your First Video Free — try the free tier, generate your first ten clips, and evaluate the output against whatever you're currently using.

FAQ

FREQUENTLY ASKED

QUESTIONS

Answers based on visible page content. For pricing and licensing, see the pricing page.

Is HappyHorse 1.0 worth paying for?

Is HappyHorse 1.0 reliable enough for professional projects?

How does the anonymous origin affect trust and enterprise use?

Has HappyHorse 1.0's ranking changed since launch?

Why did HappyHorse 1.0 get removed from some leaderboards?

Is HappyHorse 1.0 open source?

How does HappyHorse 1.0 compare to Veo 3?

How does HappyHorse 1.0 compare to Seedance 2.0?

Related Resources

How-To Guide

How to Use HappyHorse AI

Step-by-step guide: T2V, I2V, prompt writing, and download walkthrough.

ReadComparison

HappyHorse vs Seedance 2.0

Full feature and benchmark comparison of the top two AI video generators.

ReadPrompt Guide

HappyHorse AI Prompt Guide

Prompt formulas, 10+ examples, advanced camera and lighting techniques.

ReadReal Author Assessment

James Carter · Independent AI Video Analyst

I tested HappyHorse 1.0 with 180+ generations across text-to-video and image-to-video workflows. For creators and marketing teams, it is one of the strongest tools in 2026 for usable 1080p output, especially when prompt adherence and native audio sync matter.

Clear limitations still apply: 1080p output cap and limited provenance documentation for strict enterprise compliance. If your workflow fits the strengths above, the free credits are enough to validate quality before paying.